Adam Haar Horowitz, together with Agnes Cameron, Ishaan Grover, Tim Robertson, Owen Trueblood and Gary Zhang, worked with CAST Visiting Artist Agnieszka Kurant at the 2017 Hacking Arts Festival on a “signature hack,” a new feature of the festival that pairs artists with student collaborators. The project, the Animal Internet, is now featured in SFMoMA’s Heavy Machinery publication. CAST spoke with Horowitz about this collaboration, his many other artistic projects and the ideas that drive his work.

Some background about Adam Haar Horowitz…

From a young age, Adam Haar Horowitz was involved in what he describes as “strange and macabre” off-Broadway theater productions, thanks to his playwright mother and her artistic circle of friends. He gained a solid foundation in the arts growing up in New York and collaborating with performance artists. When he came to MIT in his junior year to study with MIT neuroscientist John Gabrieli in Brain and Cognitive Sciences, he brought his artistic training to bear on his research into human experience. Now, as a Master’s student in Pattie Maes’s Fluid Interfaces Group in the MIT Media Lab, he works at the intersection of neuroscience and engineering, and often creates artworks that both generate and interrogate experience.

“I think there’s a lot of low-hanging fruit in the brain and cog sciences,” when it comes to source material for art, he says. “There’s so much in neuroscience that has the potential to reframe experience—revealing links between the body and brain; perceiving your own perception in vision, audition and touch; how you compose the world around you; how you view your consciousness….” He adds, “It regularly happens that I read neuro papers that just reframe how I see myself as a person acting in the world, because brain questions are practical, but also deeply personal and philosophical.”

His interest in people and in tools for self-examination led him across several disciplines. He has worked in performance art with the Marina Abramovic Institute; in city government on behavioral economics, behavioral science and policy; and in researching meditation, metacognition, attention and mind wandering by conducting fMRI experiments. Some of the devices, interfaces and experiences that he produces have applications in the consumer market, while others are designed for purely aesthetic ends.

Q&A

How did your collaboration with Agnieszka Kurant begin?

I have been involved with Hacking Arts for a while. The first year I was involved was heavy on engineering output and entrepreneurship, and had less purely artistic output. I joined Hacking Arts to further the mission of enticing people from art disciplines to engage with science and tech, and vice versa. This past year a third of the attendees were from RISD, and we have a big MassArt contingent along with our MIT, CMU and other hackathon veterans, which changes the whole personality, composition and implicit value structure of the weekend together.

The “signature hack” serves that goal, displaying how those well versed in engineering work in tandem with somebody who really speaks and prioritizes the language of art. Agnieszka also speaks the languages of AI, swarm intelligence, and Mechanical Turk. She thinks deeply about the implications of mechanizing labor and the ethical questions it raises, which people in computer science or engineering using these tools consider but perhaps don’t specialize in. At Hacking Arts, it was exciting for us to engage with somebody who is using the same tools that we are, but who is deeply rooted in theory and who is thinking through a completely different lens.

We [Horowitz and team members Agnes Cameron, Ishaan Grover, Tim Robertson, Owen Trueblood and Gary Zhang] reached out to Agnieszka because her interests aligned with ours. She wanted to do a project, generally, which had to do with emergent intelligence, and with physicalizing the immaterial. Physicalizing the immaterial interests me both from a neuroscience and a performance art perspective.

The Animal Internet? On such a complex project, what different types of expertise were required?

If you want to go from any sort of Internet aggregate decision making to machine actuation, you need expertise in computer science and electrical engineering, which can be prohibitively expensive and time consuming. I would expect that the project we did would take a month or two, if it weren’t for a concentrated team of awesome people who had each of the skills, and who were there for 24 hours, in hackathon mode, ready to sprint.

We had folks on the team who were hacking electronics for controllability [the ability to move a system around in its entire configuration space using only certain admissible manipulations], who were tying that controllability into swarm intelligence, who were tying that swarm intelligence into online behavior, who were aggregating that online behavior, who were doing Twitter sentiment analysis, and then caching all that sentiment analysis in order to change an AI landscape, which in turn changed resources, which then changed mechanical movement.

For animating the Internet, and for giving a body to invisible bodies—that requires some AI expertise, some electronic hacking expertise, some infrared communication expertise, just a lot of things. But the really cool thing about MIT is, it’s filled with 20-somethings who know how to do all these things, and they’re all so energetic and enabled and excited. And basically, if a project will be really cool, and it’ll only take us a day, and you go grab a few of them, mostly they’re down. Mostly they’re into it.

My job was to work with Agnieszka for a month, take that big idea and figure out how it would fit into this space. What does it mean here? How do we materialize it? And what kind of different skills would it take to materialize each of the pieces? Then, identify who could fit all those bits, and who would really care about this project.

So, I helped with that translation from theme into project, and then aggregating a team who could do it in a day. Agnieszka fits beautifully into a technical landscape, and brings a lot of language that we’re lacking. On her part, there’s a lot of desire to create joy, to create questions, and to make some problems instead of solving them. I had the really fun job of just tapping into a bit of that pent-up energy, which I love.

Let’s talk about the robots themselves—the small swarm animals, or robot gerbils, and the larger one for which you hacked a toy robot cat. What was the process of both building the robots and then activating them?

We wanted to make two organisms. One organism is a swarm, because we’re interested in aggregate intelligence; its movement is controlled by Twitter sentiment analysis. The other one is a superorganism; it reacts to Mechanical Turk, which is an online platform for labor.

For the swarm, we needed lots of little things that could talk to each other. Swarm bots are very expensive. They make some at Harvard called kilobots, but it’s around $1,000 to get 10 of them. So we bought these really cheap toys called hexbugs. We hacked into the hexbugs and added an infrared communication device to them. Owen led this part. Then we did that with each of them so they could communicate with each other. With a night of hacking, we made those hexbugs into a swarm robot intelligence. Instead of buying swarm robots, we hacked toys to create a swarm that we can actually control.

And how are the swarm robots controlled by Twitter?

There’s an AI model called a Sugarscape that has a distribution of resources, which Agnes built. The resources are dynamically changed by Twitter, and then where the swarm goes is determined by the resources. So there’s an intermediary step, where Twitter hashtag sentiment changes resource distribution on this Sugarscape AI model, and that changes the movement of the hexbugs, which are now a swarm intelligence because they’ve been hacked.
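[The chain described here, sentiment changing resources and resources steering movement, can be sketched in a few lines. This is a rough illustration, not the team's code: the grid layout, the rule that positive sentiment enriches the right half of the grid, and the function names `make_grid`, `apply_sentiment` and `step_agent` are all assumptions made for the example.]

```python
def make_grid(size, fill=1.0):
    """Create a square resource grid with the same amount in every cell."""
    return [[fill for _ in range(size)] for _ in range(size)]


def apply_sentiment(grid, sentiment):
    """Shift resources in place (hypothetical rule).

    Positive sentiment adds resources to the right half of the grid and
    removes them from the left half; negative sentiment does the reverse.
    Resource levels never drop below zero.
    """
    size = len(grid)
    for row in grid:
        for x in range(size):
            on_right = x >= size // 2
            delta = sentiment if on_right else -sentiment
            row[x] = max(0.0, row[x] + delta)


def step_agent(grid, pos):
    """Move one agent to the richest cell among its own and its 4 neighbours."""
    size = len(grid)
    x, y = pos
    candidates = [(x, y), (x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)]
    in_bounds = [(cx, cy) for cx, cy in candidates
                 if 0 <= cx < size and 0 <= cy < size]
    # On ties, max() keeps the first candidate, so the agent stays put
    # when its own cell is already among the richest.
    return max(in_bounds, key=lambda c: grid[c[1]][c[0]])


grid = make_grid(4)
apply_sentiment(grid, 0.5)            # a positive sentiment reading
new_pos = step_agent(grid, (1, 0))    # agent on the poorer left side
```

[In this toy version an agent on the poorer side steps toward the richer side. A physical swarm would also need a way to translate grid positions into motor commands, which is outside the scope of the sketch.]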

Ishaan created something called a scraper for Twitter, which collects tweets tied to certain hashtags, and chose the hashtag #protest. Then you do sentiment analysis on those tweets to see if they are emotionally high valence, low valence, high arousal or low arousal. This sentiment changes the hexbug swarm behavior, animating collective sentiment about #protest. Now you see sentiment swarm.
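[For readers unfamiliar with valence and arousal scoring, here is a minimal sketch of the idea, assuming a tiny hand-made word list. The lexicon values, the function names `score_tweet` and `quadrant`, and the word-matching approach are hypothetical and stand in for whatever sentiment model the team actually used.]

```python
# Hypothetical lexicon: word -> (valence, arousal), each in the range -1..1.
LEXICON = {
    "hope": (0.8, 0.4),
    "angry": (-0.7, 0.9),
    "calm": (0.5, -0.6),
    "tired": (-0.4, -0.7),
}


def score_tweet(text):
    """Average the (valence, arousal) of every lexicon word in the text.

    Words are split on whitespace only, so punctuation attached to a word
    will prevent a match. Returns (0.0, 0.0) when no word is recognized.
    """
    hits = [LEXICON[w] for w in text.lower().split() if w in LEXICON]
    if not hits:
        return (0.0, 0.0)
    valence = sum(v for v, _ in hits) / len(hits)
    arousal = sum(a for _, a in hits) / len(hits)
    return (valence, arousal)


def quadrant(valence, arousal):
    """Name the quadrant of the valence/arousal plane a score falls in."""
    v = "high valence" if valence >= 0 else "low valence"
    a = "high arousal" if arousal >= 0 else "low arousal"
    return f"{v}, {a}"


label = quadrant(*score_tweet("so angry today"))
```

[A score like this, averaged over many tweets, is the kind of signal that could then be fed into a resource model.]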

Could you explain how you made the superorganism from a toy cat robot?

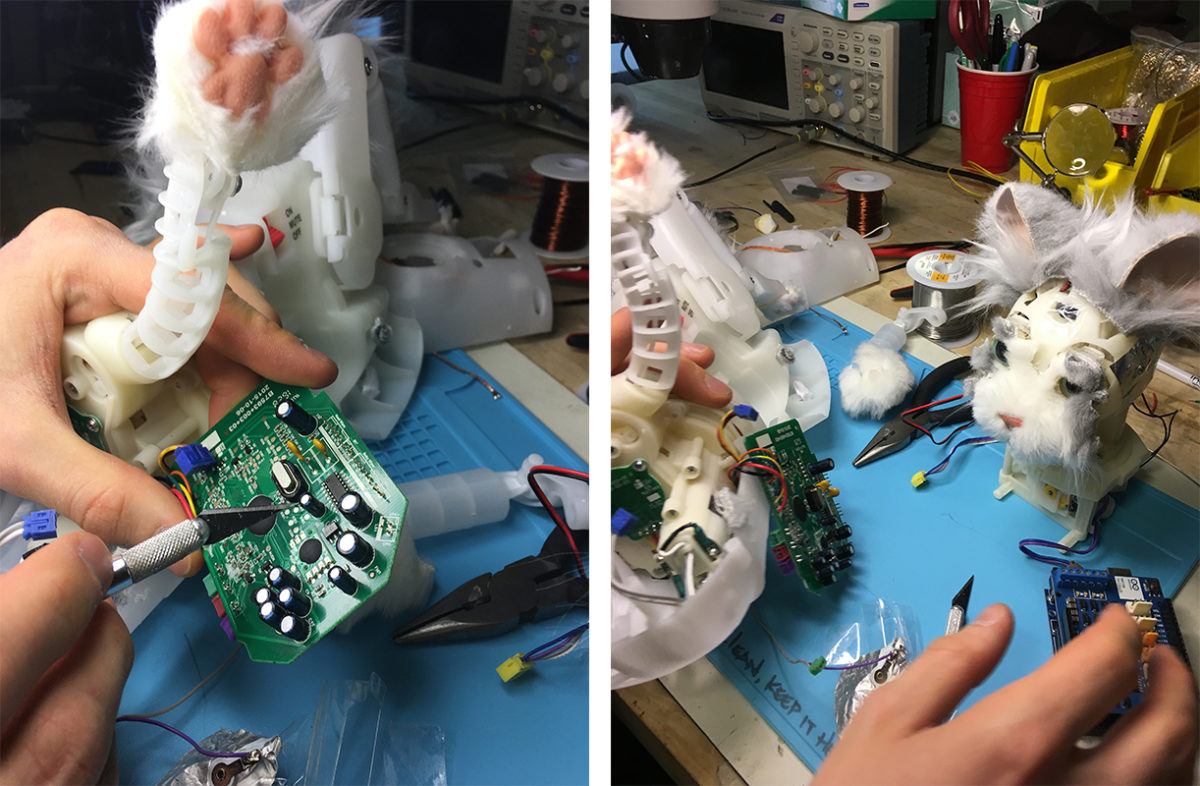

For the second one, we needed one organism which we could actuate [put into mechanical motion]; but again, getting a big robot with a lot of degrees of freedom is usually quite expensive, especially one that has some interface, like a GUI [graphical user interface] where you can control it. So instead, we just took Hasbro cats, and we opened them up; they have all these motor lines. I got to cut the motor lines with an X-ACTO knife, solder onto them, and then feed that out to a micro-controller, so you can create your own interface to control it.

We had quite a few legs and ears and mouths and arms and everything that we could control. Suddenly we had these two totally controllable robots. Again, we didn’t buy something with some actuation GUI. We just hacked into existing ones. Then, you collect Mechanical Turk responses to a questionnaire on sleepiness, stress and happiness, and the workers’ answers are synthesized into one overall behavior, which drives actuation of the hacked cats.
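[One simple way to synthesize many questionnaire answers into a single behavior is to average each question and act on the strongest result. The sketch below assumes a 1-to-5 rating scale and invents the behavior names and the functions `aggregate` and `choose_behavior`; the interview does not say how the team actually combined the answers.]

```python
from statistics import mean

QUESTIONS = ("sleepiness", "stress", "happiness")

# Hypothetical mapping from the dominant feeling to a cat movement.
BEHAVIORS = {
    "sleepiness": "slow_blink",
    "stress": "twitch_ears",
    "happiness": "wag_tail",
}


def aggregate(responses):
    """Average each question's rating across all workers."""
    return {q: mean(r[q] for r in responses) for q in QUESTIONS}


def choose_behavior(averages):
    """Pick the movement tied to the highest average rating."""
    dominant = max(averages, key=averages.get)
    return BEHAVIORS[dominant]


responses = [
    {"sleepiness": 1, "stress": 4, "happiness": 2},
    {"sleepiness": 2, "stress": 5, "happiness": 3},
]
behavior = choose_behavior(aggregate(responses))
```

[The chosen label would then be translated into signals on the motor lines by the microcontroller.]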

Basically, the project gives two ways to mechanize and materialize the digital and invisible. It materializes an intelligence, materializes collective emotion, and materializes an invisible Mechanical labor force, opening these things up to new questions, new interactions—and giving back a body.

You’ve been involved in numerous art collaborations. Your projects range from automated belly dancing to meditation analysis to a platform for semi-lucid dreaming, Dormio. You also helped found an art collective here at MIT, the Hot Milks Foundation. What is it and what kind of work do you do together?

One of the first things I did when I arrived at the Media Lab was help start an art collective; it has 12 or so people from ACT and seven of the Media Lab groups. I asked the Museum of Fine Arts if we could do a performance project last year, and since then the MFA has commissioned us to do six other projects.

For one event, we set up a lab in one of the galleries at the MFA in the guise of an Emotional Spa. The MFA asked us to come perform for a fundraiser, with this interesting implicit goal to make some art that makes people happy, gets them in a donating mood. My question was primarily, is the job of a museum really to show people something pleasing, something which is engendering joy? Personally, I think no, that point is trite. But if it’s not, why do museum donors want to be made happy, why is it a safe strategy to show pleasing work? And how much is artwork and intent about control after all?

But the idea of controlling emotion with art, and the fact that science and tech are entering the museum, that this museum is interested in doing facial recognition with cameras and seeing who looks at what painting, with what gaze direction, for how long, and with what reaction—all that emotion engineering struck me as really interesting, as warranting some kind of futuring, some performative speculation.

The mechanization of the art experience combined with the desire for controllability, to me, points to a sort of dystopian inorganic aesthetic future. So we enacted that, with tools from neuroscience and emotion studies. To study an emotion, you often have to engender emotion; there’s a lot of work in how to make people feel certain things. And so basically, what I wanted to say is, this is a future in which I can take the feeling from the frame, without the frame itself, and give you the feeling directly. We won’t even need a museum. We’ll have a museum in a chair. For the piece, we had some electronic muscle actuation. We had some vibration. We had sounds. We had affective pictures. We had touch. We had the sharing of your experience. We had placebo pills. It was this full spectrum happiness spa where we pumped joy into people, to the degree where it was almost unpleasant, where it verged on some very fake feeling, but really overwhelming joy.

The feedback we got was a mix, starting with “Is this art, is this science?” There were a lot of those questions. There was a lot of confusion about how much was real or unreal. Many folks were like, “Did I enjoy my joy? Did you enjoy your joy? What was that?” It was perhaps a nice science-in-art intersection because the art museum is inviting in a tool that isn’t its own. We extrapolated it to an absurd future to ask if that’s the future you want to create, but even without that whole moralizing or didactic idea, it was a really weird experience to have these tools of emotion creation directed at you, that come from a lab and don’t ever leave a lab, all aggregated in a way they wouldn’t be if the goal was purely scientific.

Art contexts are already really interested in emotion generation. They just don’t do it mechanistically. They do it perhaps intuitively, or aesthetically. This is another route, a scientific route, to the same sort of engendering of emotion, and the question about how much controllability, how much quantified self and art you really want.

What are some ideas that animate your work and motivate you?

I am totally excited about bringing a corpus of knowledge in the arts and humanities to bear on the tool creation that’s happening in the engineering world. My ideas—whether they’re about chills, or interfacing with dreaming, or materializing auditory hallucinations—these are from yogas and art practices, from theologies and philosophies. My experience is that most really wonderful ideas about being human are things that people have reflected on over the centuries. I try to create tools out of those reflections.

I think that fundamentally good science and good neuroscience is about self-examination. Good human-computer interaction and good consumer electronics is about the creation of experience. Good art asks questions about both what it is to be human and what it is to experience. And so good art/science should be engaged in the generation of experiences, and the questioning of those experiences. And so it should put you in a space that’s enlivening and inspiring, but also a space that’s often unstable or uncomfortable. I’m interested in taking self-examination that exists in the neurosciences and putting it in an art context, so we can ask different questions using the tools of engineering to generate those experiences.

MIT’s a really exciting place to be doing art work because so much of the future comes out of here, and needs so much criticality, poking, play. But often an engineering space is a space that’s interested in moving forward as fast as possible, which is why bringing in someone like Agnieszka, who asks these questions about the tools that we’re already using, like MTurk, AI, swarm intelligence, is really fruitful for us. It’s a different sort of scholarship.

“Behind the Artwork” is an ongoing series in which MIT researchers who worked closely with CAST Visiting Artists share the personal stories, scientific insights and technological developments that went into developing their collaborative projects.